WebDevPro #134: Rendering in Next.js is a system of trade-offs, not a feature checklist

Crafting the Web: Tips, Tools, and Trends for Developers

Welcome to this week’s issue of WebDevPro!

Most of us once thought of rendering strategies as a matter of picking the “best” option.

Server-side rendering felt powerful. Static generation felt fast. Client-side rendering felt flexible. The assumption was simple: choose one, commit, and move on.

That mental model breaks down the moment your application grows beyond a few pages.

In practice, rendering is not a decision you make once. It is a system of trade-offs you keep revisiting as your product evolves. Next.js does not force you into one approach. It gives you multiple levers and expects you to use them deliberately.

The real shift is not technical. It is conceptual. You stop asking “Which rendering strategy should I use?” and start asking “Where should this piece of UI live, and when should it be rendered?”

The illusion of a single rendering strategy

Traditional frameworks leaned heavily in one direction.

Server-rendered apps generated HTML on every request. Client-rendered apps shipped a JavaScript bundle and built everything in the browser. Static site generators pushed everything to build time. Each model worked well in isolation. Each also came with blind spots.

Next.js breaks that boundary. You can render some pages at request time, others at build time, and still defer specific components to the client. This flexibility is powerful, but it also introduces a new responsibility: understanding the cost of each choice.

Rendering is no longer about capability. It is about trade-offs across performance, scalability, user experience, and operational complexity.

Server-side rendering is about control, not just freshness

Server-side rendering still plays a critical role, especially when the content depends on the request itself.

When a page is rendered on the server for every request, you gain control over what gets sent to the browser. You can safely access private APIs, validate data, and tailor responses per user. The browser receives fully formed HTML, which improves compatibility and helps search engines understand your content immediately. That control comes at a cost.

Every request triggers computation. Every data dependency introduces latency. Even a well-optimized server will feel slower compared to serving a prebuilt file. Navigation between pages can feel heavier because each transition depends on server work.

There is also an operational dimension. A server-rendered app is not just code. It is infrastructure. It scales with traffic, and that scaling has a cost.

SSR shines when you genuinely need per-request freshness or personalization. It becomes a liability when used out of habit.
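To make "content depends on the request itself" concrete, here is a minimal sketch in the shape of the Pages Router's `getServerSideProps`. The `RequestContext` type is a trimmed local stand-in for the context object Next.js passes in, and `readUser`, the `user` cookie, and the greeting props are hypothetical names invented for illustration.

```typescript
// Trimmed local stand-in for the context Next.js passes to
// getServerSideProps (assumption: only the Cookie header is needed).
type RequestContext = { req: { headers: { cookie?: string } } };
type DashboardProps = { greeting: string; renderedAt: string };

// Hypothetical helper: read a "user" value out of the Cookie header.
function readUser(cookieHeader: string | undefined): string | null {
  if (!cookieHeader) return null;
  for (const part of cookieHeader.split(";")) {
    const [name, ...rest] = part.trim().split("=");
    if (name === "user") return decodeURIComponent(rest.join("="));
  }
  return null;
}

// In a real pages/ file this function would be exported so that Next.js
// can call it on every request, before rendering the page on the server.
async function getServerSideProps(
  context: RequestContext
): Promise<{ props: DashboardProps }> {
  const user = readUser(context.req.headers.cookie);
  return {
    props: {
      // Personalized per request: this is what SSR is buying you.
      greeting: user ? `Welcome back, ${user}` : "Welcome, guest",
      renderedAt: new Date().toISOString(),
    },
  };
}
```

Because the props depend on the incoming cookie, this work cannot be moved to build time. If nothing in the function reads from the request, that is usually a hint the page does not need SSR.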

A common mistake is defaulting to SSR for anything that “feels dynamic.” In reality, many of those pages do not need to be recomputed on every request. They only need to feel dynamic.

Client-side rendering shifts responsibility to the browser

Client-side rendering takes the opposite approach. Instead of sending meaningful HTML, the server delivers a minimal shell and a JavaScript bundle. The browser then builds the interface, fetches data, and manages state.

This model feels fast after the initial load. Once the application has loaded and mounted in the browser, navigation becomes seamless. Transitions are smooth. Interactions feel immediate. The app behaves more like a native experience. There is a reason this approach became popular.

It reduces server workload significantly. The server becomes a delivery mechanism rather than a computation layer. You can scale more easily, especially in serverless environments. You also gain flexibility in how and when you fetch data.

But the trade-offs are hard to ignore. The first load can be painfully slow on weak networks. Users may stare at an empty screen while JavaScript downloads and executes. Search engines see very little initial content, which can impact discoverability and performance scores.

Client-side rendering works well when SEO is not a priority and when interactivity outweighs initial load performance. Think dashboards, internal tools, or authenticated user spaces.

Even then, it should be a deliberate choice, not a default fallback.

Static generation optimizes for speed and scale

Static site generation flips the model again. Instead of rendering on the server per request or in the browser at runtime, you render at build time. The output is a static HTML file that can be served instantly.
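As a rough sketch of what "render at build time" looks like in the Pages Router, the function below follows the shape of `getStaticProps`. The `loadPosts` helper and its sample data are hypothetical stand-ins for a CMS call or a file-system read.

```typescript
type Post = { slug: string; title: string };
type BlogProps = { posts: Post[]; builtAt: string };

// Hypothetical data source; stands in for a CMS or file-system read.
async function loadPosts(): Promise<Post[]> {
  return [
    { slug: "hello-world", title: "Hello, world" },
    { slug: "rendering-trade-offs", title: "Rendering trade-offs" },
  ];
}

// In a real pages/ file this function would be exported. Next.js runs it
// during the build, not per request, and bakes the returned props into
// the generated HTML.
async function getStaticProps(): Promise<{ props: BlogProps }> {
  const posts = await loadPosts();
  return { props: { posts, builtAt: new Date().toISOString() } };
}
```

The `builtAt` value stays the same for every visitor until the next build, which is a simple way to see both the benefit and the limitation of the approach.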

This approach is hard to beat in terms of performance. There is no computation at request time. The server simply returns a file. CDNs cache and distribute it globally. Latency drops dramatically. Scalability becomes almost trivial.

It also has a smaller attack surface. There are no runtime calls to sensitive APIs and no database queries during requests. Everything needed is already embedded in the generated output.

The limitation is obvious. Static content does not change unless you rebuild. That constraint used to make static generation impractical for dynamic applications. Rebuilding an entire site for a small content change is inefficient and often unrealistic. Next.js softens that limitation through incremental static regeneration.

Incremental regeneration changes the conversation

Incremental static regeneration introduces a middle ground. You can generate a page once and then update it periodically without rebuilding the entire application. Instead of choosing between fully static and fully dynamic, you define how stale your data can be.

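A page might be regenerated every few minutes or hours, depending on the revalidation window you set. Regeneration is driven by traffic: the first request after the window expires is still served the cached page while Next.js re-renders it in the background, and subsequent users receive the updated version.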
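In the Pages Router, the revalidation window is set by returning a `revalidate` value (in seconds) from `getStaticProps`. The serving behavior itself can be modeled with a small simulation. This is not Next.js internals, only a sketch of the stale-while-revalidate idea: `createRevalidatingPage` and its injectable clock are hypothetical, and the re-render happens inline here, whereas the framework does it in the background.

```typescript
type CachedPage = { html: string; generatedAt: number };

// Hypothetical simulation of a page served with a revalidation window.
function createRevalidatingPage(
  render: () => string,          // produces fresh HTML
  revalidateMs: number,          // how stale the page is allowed to get
  now: () => number = Date.now   // injectable clock, handy for testing
): () => string {
  let cached: CachedPage | null = null;

  return function serve(): string {
    const time = now();
    if (cached === null) {
      // Nothing generated yet: render once and keep the result.
      cached = { html: render(), generatedAt: time };
      return cached.html;
    }
    const served = cached.html;
    if (time - cached.generatedAt >= revalidateMs) {
      // Window expired: this request still gets the cached page,
      // and the fresh render is stored for later requests.
      cached = { html: render(), generatedAt: time };
    }
    return served;
  };
}

// Usage: a 1-second window with a fake clock.
let fakeClock = 0;
let renderCount = 0;
const servePage = createRevalidatingPage(
  () => `version ${++renderCount}`,
  1000,
  () => fakeClock
);
```

Advancing `fakeClock` past the window and calling `servePage()` twice shows the pattern: the first call returns the old version, the second returns the new one.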

This model shifts the question from “Is this page static or dynamic?” to “How fresh does this data need to be?”

Many applications do not require real-time updates. A slight delay is acceptable if it significantly improves performance and reduces load. ISR lets you capture that balance without overcommitting to server-side rendering.

Rendering decisions are architectural decisions

At an intermediate level, the real challenge is not understanding how each strategy works. It is knowing where to apply them.

Rendering is tightly coupled with architecture. A marketing page benefits from static generation because it rarely changes and needs to load instantly. A product listing page might use a mix of static generation and periodic revalidation. A user dashboard leans toward client-side rendering for responsiveness. A checkout flow may rely on server-side rendering for security and consistency.

These are not isolated decisions. They influence data flow, caching strategies, and even how teams structure their codebase. Rendering becomes part of the system design, not just a framework feature.

The hidden cost of getting it wrong

Misusing rendering strategies can degrade your application. Overusing server-side rendering can lead to unnecessary latency and higher infrastructure costs. The app works, but it feels slower than it should.

Relying too heavily on client-side rendering can hurt first impressions. Users wait longer. Search engines struggle to index content. Performance scores drop. Using static generation without a plan for updates can create stale experiences. Content lags behind reality. Users notice.

The tricky part is that each of these issues emerges gradually. They rarely show up in small projects or local environments. They appear under real traffic, real data, and real user expectations. That is why rendering decisions deserve more attention than they usually get.

Hybrid rendering is not a feature but a mindset

Next.js is often described as a hybrid framework. That description is accurate but incomplete.

A single page can combine multiple approaches. The shell might be statically generated. Critical data might be fetched on the server. Interactive components might rely on client-side rendering.

Each part of the page is treated independently based on its requirements. This is where the framework becomes powerful. You are not forced into a single pattern. You can optimize each piece of the experience.

The challenge is maintaining clarity. Without clear boundaries, hybrid approaches can become messy. It is easy to lose track of where data is fetched, where rendering happens, and why certain decisions were made.
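One lightweight way to keep those boundaries visible is to write the decisions down next to the code. The sketch below is a hypothetical convention, not a Next.js feature: `renderingPlan`, `RouteDecision`, and `findIncompleteRoutes` are invented names, and the routes and revalidation value are illustrative.

```typescript
type Strategy = "static" | "isr" | "ssr" | "client";

type RouteDecision = {
  strategy: Strategy;
  reason: string;             // why this strategy was chosen
  revalidateSeconds?: number; // only meaningful for "isr"
};

// Hypothetical plan mirroring the kinds of pages discussed in this issue.
const renderingPlan: Record<string, RouteDecision> = {
  "/": { strategy: "static", reason: "Marketing page; rarely changes" },
  "/products": {
    strategy: "isr",
    reason: "Catalog changes periodically; slight staleness is fine",
    revalidateSeconds: 300,
  },
  "/dashboard": { strategy: "client", reason: "Authenticated; no SEO need" },
  "/checkout": { strategy: "ssr", reason: "Per-request data and consistency" },
};

// Flags routes with no recorded reason, or ISR routes with no window.
function findIncompleteRoutes(plan: Record<string, RouteDecision>): string[] {
  return Object.entries(plan)
    .filter(
      ([, d]) =>
        d.reason.trim() === "" ||
        (d.strategy === "isr" && d.revalidateSeconds === undefined)
    )
    .map(([route]) => route);
}
```

A check like `findIncompleteRoutes(renderingPlan)` could run in CI so that a new route cannot ship without someone recording why it renders the way it does.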

What developers often miss

At this stage, most developers understand the mechanics of SSR, CSR, and SSG. The gap is usually in decision-making.

Three patterns tend to show up repeatedly.

The first is defaulting to server-side rendering for anything dynamic. It feels safe, but it often introduces unnecessary overhead.

The second is treating client-side rendering as a performance solution. It improves interactions but can hurt initial load and SEO.

The third is underestimating static generation. Many pages that could be static end up being rendered dynamically simply because it feels easier.

These patterns become problematic when applied without context.

A more practical way to think about rendering

Instead of categorizing pages by rendering type, it helps to evaluate them through a few simple questions:

How often does this data change?

Does this content need to be indexed by search engines?

How important is initial load performance?

Does this page require per-user personalization?

What is the acceptable level of staleness?

These questions lead you toward a more balanced approach.

A page that rarely changes and needs strong SEO leans toward static generation. A page with user-specific data leans toward server or client rendering. A page with moderate updates fits well with incremental regeneration.
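The questions above can be sketched as a small decision helper. This is a deliberately simplified heuristic under assumed thresholds, not a rule Next.js applies: `PageProfile`, `suggestStrategy`, and the field names are hypothetical, and real pages will have nuances this ignores.

```typescript
type PageProfile = {
  changeFrequency: "rarely" | "periodically" | "constantly";
  needsSeo: boolean;
  initialLoadCritical: boolean;
  perUser: boolean;
  maxStalenessSeconds: number; // 0 means every request must be fresh
};

type Suggestion = "static" | "isr" | "ssr" | "client";

// Hypothetical heuristic: returns a starting point, not a verdict.
function suggestStrategy(page: PageProfile): Suggestion {
  if (page.perUser) {
    // User-specific data leans toward server or client rendering.
    return page.needsSeo || page.initialLoadCritical ? "ssr" : "client";
  }
  if (page.maxStalenessSeconds === 0 || page.changeFrequency === "constantly") {
    return "ssr"; // no staleness tolerated, so render per request
  }
  if (page.changeFrequency === "periodically") {
    return "isr"; // moderate updates fit incremental regeneration
  }
  return "static"; // rarely changes: build once, serve from the CDN
}

// Usage: a marketing page that changes rarely and needs strong SEO.
const marketing = suggestStrategy({
  changeFrequency: "rarely",
  needsSeo: true,
  initialLoadCritical: true,
  perUser: false,
  maxStalenessSeconds: 86400,
});
```

The value of writing it out is less the function itself and more that it forces the staleness and personalization answers to be stated explicitly for each page.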

Rendering stops being a technical choice and becomes a product decision.

The real takeaway

Rendering strategies are not competing features. They are tools with different costs and benefits.

Next.js gives you the ability to combine them, but it does not make the decisions for you. That responsibility sits with the developer.

When you align your rendering choices with the needs of your application, performance improves, infrastructure becomes more efficient, and the user experience feels intentional.

When you do not, the issues show up slowly and compound over time. Rendering is one of those areas where small decisions have outsized impact. It is worth treating it as a first-class concern, not an afterthought.

Enjoyed this piece? Take it further with Real-World Next.js by Michele Riva, and learn how to build scalable, high-performance modern web applications.

Building with AI? We want to hear from you 👀

Take our quick survey and help us shape content around real workflow bottlenecks.

This Week in the News

🧠 Copilot now asks another AI before trusting itself: GitHub is experimenting with a “second opinion” system inside Copilot CLI. A separate model from a different family reviews the agent’s plan and code to catch blind spots and edge cases before execution. The bigger shift is architectural. AI tools are starting to validate each other, not just generate output. One model writes, another critiques, and the developer sits above both.

🔐 Cloudflare just moved up the deadline for quantum-safe internet: Cloudflare is accelerating its roadmap to make the entire platform post-quantum secure by 2029, including authentication, not just encryption. The urgency comes from recent breakthroughs suggesting current cryptography could be broken sooner than expected. The takeaway is simple. Quantum risk is no longer theoretical, and migration timelines are shrinking fast.

⚙️ Node.js keeps shipping under-the-radar improvements: Recent updates to the current release line focus on better memory control, improved test runner capabilities, and incremental API enhancements. Nothing headline-grabbing, but that is the point. Node is doubling down on stability and developer ergonomics rather than chasing trends.

📦 A new way to explore npm without the CLI: Patak and Zeu introduced npmx, a fast, browser-based interface for exploring the npm ecosystem. It focuses on speed, discoverability, and a cleaner way to navigate packages without relying on terminal workflows. The idea is simple but interesting. As ecosystems grow, developer tooling is shifting from command-heavy interfaces to faster, more visual ways of understanding what’s out there.

Beyond the Headlines

⚠️ How attackers are now targeting open source maintainers: Simon Willison breaks down a new wave of supply chain attacks that rely on social engineering, not exploits. Maintainers are being manipulated into merging malicious code through trust, urgency, and subtle persuasion. The pattern is uncomfortable but clear. The weakest point in the supply chain is no longer code. It is the human reviewing it.

🧩 Claude is not your software architect: This piece challenges a growing assumption that AI can design systems end-to-end. While models can generate code and suggest structures, they lack the context needed for real architectural decisions. The distinction matters. AI can assist implementation, but architecture still depends on trade-offs, constraints, and long-term thinking.

🔐 Your email is still easy to scrape: Email obfuscation techniques that used to work are now trivial to bypass. This article shows how bots extract addresses even from “protected” formats and what actually works today. The takeaway is practical. Security through obscurity is fading fast, especially when automation keeps improving.

🎥 The React hook most developers still ignore: useSyncExternalStore solves a specific but important problem: syncing external state with React without breaking consistency. It rarely shows up in everyday code, which is why many developers overlook it. But once you start working with shared state across systems, it becomes one of those tools that quietly fixes hard-to-debug issues.

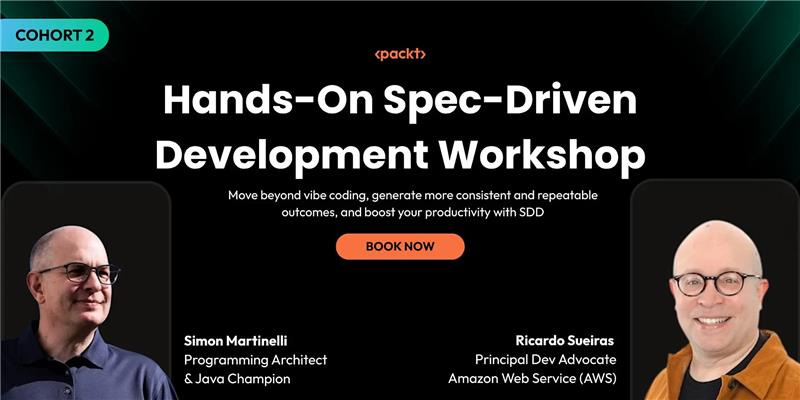

Stop Prompting. Start Speccing.

After a hugely successful Cohort 1, we’re back with the Cohort 2 hands-on workshop!

Here you’ll build a real full-stack application using the same spec-first methodology used by MAANG engineering teams.

Tool of the Week

🦴 Generate skeleton screens directly from your DOM

Boneyard takes a snapshot of your existing DOM and automatically generates pixel-perfect skeleton screens. Instead of manually building placeholders, it mirrors your actual layout, saving time and keeping loading states visually consistent.

It’s a small idea with a practical payoff, especially for apps where perceived performance matters as much as real speed.

That’s all for this week. Have any ideas you want to see in the next article? Hit Reply!

Cheers!

Editor-in-chief,

Kinnari Chohan

👋 Advertise with us

Interested in sponsoring this newsletter and reaching a highly engaged audience of tech professionals? Simply reply to this email, and our team will get in touch with the next steps.