WebDevPro #135: MCP Is Redefining How We Expose Backend Capabilities

Crafting the Web: Tips, Tools, and Trends for Developers

Welcome to this week’s issue of WebDevPro!

For years, web development has revolved around a stable contract: define endpoints, shape responses, and let clients call into your system.

That model still works, but it starts to feel strained when the client is no longer just a browser or a mobile app, but an AI system that needs to discover, interpret, and use your backend dynamically.

Most AI integrations today are still improvised. Teams wire up function calling, patch together tool layers, and build thin wrappers around internal APIs. The result is predictable: duplication, brittle integrations, and systems that are hard to extend.

MCP introduces a different approach. Instead of treating AI as an add-on, it defines a standard way to expose capabilities so clients can discover and use them reliably.

This is not just an AI story. It is an architectural shift that web developers should understand early.

From Endpoints to Capabilities

Traditional APIs are built around endpoints. You define routes, attach handlers, and document how clients should call them. MCP shifts the focus from endpoints to capabilities.

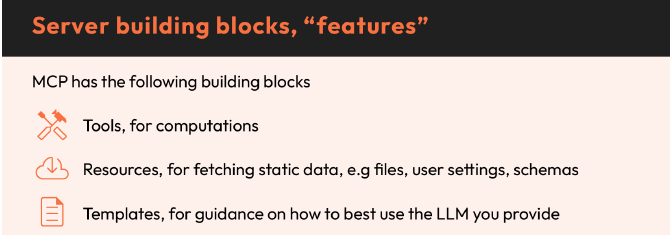

Instead of exposing a set of URLs, you expose:

Tools

Resources

Prompts

Tools perform computation, resources supply context, and prompts package reusable instruction patterns. Together they form the core building blocks of MCP.

Endpoints assume the client already knows what exists. Capabilities allow the client to discover what is available at runtime.

That removes a lot of implicit coupling between systems. It also reduces the need to hardcode assumptions into every integration.
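To make the discovery idea concrete, here is a minimal sketch in plain Python. It is not the MCP SDK: `CapabilityRegistry`, `list_tools`, `call_tool`, and the `get_order_status` tool are hypothetical names chosen for illustration. The point is only that the client asks what exists before it calls anything.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    """A hypothetical capability record: name, description, and handler."""
    name: str
    description: str
    handler: Callable[..., Any]


class CapabilityRegistry:
    """Illustrative in-memory registry; an assumption, not the MCP SDK."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, name: str, description: str) -> Callable:
        """Decorator that records a function as a named tool."""
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = Tool(name, description, fn)
            return fn
        return decorator

    def list_tools(self) -> List[Dict[str, str]]:
        # What a client sees at runtime: names and descriptions, not URLs.
        return [{"name": t.name, "description": t.description}
                for t in self._tools.values()]

    def call_tool(self, name: str, **arguments: Any) -> Any:
        # Invocation is by discovered name, with keyword arguments.
        return self._tools[name].handler(**arguments)


registry = CapabilityRegistry()


@registry.register("get_order_status",
                   "Return the shipping status for an order ID.")
def get_order_status(order_id: str) -> str:
    # Stubbed data for the example.
    return {"A100": "shipped"}.get(order_id, "unknown")


# The client discovers first, then invokes what it found.
available = registry.list_tools()
result = registry.call_tool(available[0]["name"], order_id="A100")
```

Nothing in the client code above hardcodes a route. It only depends on whatever the registry reports at runtime.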

A Familiar Model, Framed Differently

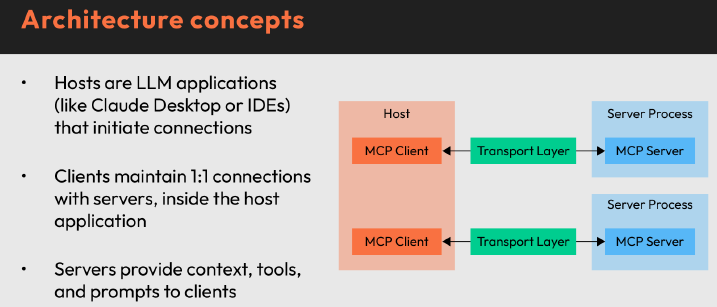

At a high level, MCP follows a structure that will feel familiar:

A server exposes functionality

A client connects and invokes it

A host provides the environment where interactions happen

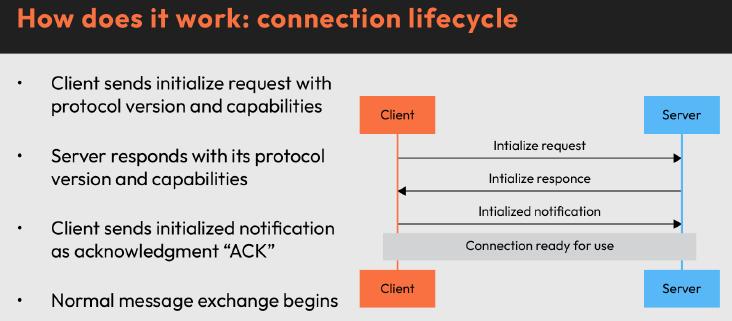

Communication happens through structured messages and starts with an explicit handshake. The client sends an initialize request, the server replies with what it supports, and the client confirms with an initialized notification, so both sides agree on capabilities before any work begins.
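As a rough sketch, the handshake messages can be written out as JSON-RPC payloads. The field names below follow the published MCP specification as we understand it. The protocol version string, the client and server names, and the helper function names are assumptions made for the example.

```python
import json

# Example value only; the real string depends on the spec revision in use.
PROTOCOL_VERSION = "2025-06-18"


def build_initialize_request(request_id: int) -> dict:
    """Client -> server: propose a protocol version and declare capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            # Hypothetical client identity.
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def build_initialize_result(request: dict) -> dict:
    """Server -> client: echo the request id and advertise capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {"tools": {}, "resources": {}, "prompts": {}},
            # Hypothetical server identity.
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        },
    }


def build_initialized_notification() -> dict:
    """Client -> server: a notification, so it carries no id."""
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


request = build_initialize_request(1)
response = build_initialize_result(request)
notification = build_initialized_notification()

for message in (request, response, notification):
    print(json.dumps(message))
```

Only after the third message would a client start listing or calling tools.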

For web developers, this is closer to:

API discovery combined with schema introspection

Contract negotiation before execution

Runtime awareness instead of static assumptions

The difference is that this model is designed for AI clients that interpret intent rather than strictly follow instructions.

Why This Matters in Practice

The biggest problem MCP addresses is not performance or scale. It is integration friction.

Without a standard:

Each AI integration defines its own schema

Tool selection becomes unreliable

Context handling becomes inconsistent

Systems become harder to compose

The source material highlights three recurring pain points:

Fragmented context and limited windows

Tool and memory integration complexity

Lack of composability across domains

MCP tackles these by standardizing how capabilities are described and invoked. For web developers, this is similar to the role OpenAPI played for REST. It does not replace APIs. It makes them easier to integrate and reason about.

The Real Design Challenge: Clarity Over Cleverness

One of the most practical insights from the material is how much naming and structure influence behavior.

In MCP systems:

Tool descriptions guide selection

Parameter names influence argument extraction

Return shapes affect how results are used

Vague naming leads to incorrect tool calls. Overloaded schemas introduce ambiguity. Overly complex resources increase cost and reduce accuracy.

This is not new, but the consequences are sharper.

In traditional APIs, poor naming slows developers down. In AI-driven systems, it changes how the system behaves.

That forces a shift toward:

Clear, literal naming

Minimal and explicit schemas

Stable, predictable outputs

This is API design discipline applied in a stricter environment.
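Here is a small illustration of the difference, written as two tool definitions with a `name`, a `description`, and a JSON Schema under `inputSchema`. The tools themselves are made up, and `lint_tool` is a hypothetical set of heuristics for this example, not something MCP provides.

```python
from typing import Dict, List

# A definition that gives a model almost nothing to work with.
vague_tool = {
    "name": "process",
    "description": "Handles data.",
    "inputSchema": {
        "type": "object",
        "properties": {"x": {"type": "string"}},
    },
}

# The same idea with literal naming and an explicit, minimal schema.
clear_tool = {
    "name": "get_invoice_total",
    "description": "Return the total amount due for one invoice, in cents.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "invoice_id": {
                "type": "string",
                "description": "Invoice identifier, for example INV-1042.",
            }
        },
        "required": ["invoice_id"],
    },
}


def lint_tool(tool: Dict) -> List[str]:
    """Flag common clarity problems. Thresholds are arbitrary assumptions."""
    problems: List[str] = []
    if len(tool["description"].split()) < 5:
        problems.append("description is too short to guide tool selection")
    schema = tool["inputSchema"]
    for name, spec in schema.get("properties", {}).items():
        if len(name) < 3:
            problems.append(f"parameter '{name}' has a non-descriptive name")
        if "description" not in spec:
            problems.append(f"parameter '{name}' has no description")
    if not schema.get("required"):
        problems.append("no required parameters are declared")
    return problems


vague_problems = lint_tool(vague_tool)
clear_problems = lint_tool(clear_tool)
```

The vague definition trips every check, while the clear one returns an empty list. A check like this could run in CI next to your usual API linting.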

Transport, Deployment, and the Web Stack

Another reason this matters for web developers is where these systems end up.

Local development may start with a simple transport such as standard input/output, but production systems move toward HTTP-based communication, which aligns with existing infrastructure.

That brings familiar concerns back into play:

Routing and endpoints

Authentication and token validation

Rate limiting and gateway policies

Observability and request tracing

The recommendation to move toward a single HTTP endpoint for MCP traffic reflects a clear alignment with modern backend practices.

It is an extension of the web stack, not a parallel ecosystem.
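A single endpoint means routing moves from the URL into the message. The sketch below shows the body of a hypothetical `POST /mcp` handler, kept free of any web framework so it can sit behind whichever one you already use. `tools/list` and `tools/call` are MCP method names; the `ping` tool and the function names are assumptions for illustration.

```python
import json
from typing import Any, Callable, Dict


def list_tools(params: Dict[str, Any]) -> Dict[str, Any]:
    # Advertise one hypothetical tool.
    return {"tools": [{"name": "ping", "description": "Return 'pong'."}]}


def call_tool(params: Dict[str, Any]) -> Dict[str, Any]:
    if params.get("name") != "ping":
        # Tool-level failures are reported inside the result.
        return {"content": [{"type": "text", "text": "unknown tool"}],
                "isError": True}
    return {"content": [{"type": "text", "text": "pong"}]}


# One URL, many methods: dispatch happens on the JSON-RPC "method" field.
METHODS: Dict[str, Callable[[Dict[str, Any]], Dict[str, Any]]] = {
    "tools/list": list_tools,
    "tools/call": call_tool,
}


def handle_mcp_post(body: bytes) -> bytes:
    """Turn one request body into one response body."""
    message = json.loads(body)
    handler = METHODS.get(message.get("method"))
    reply: Dict[str, Any] = {"jsonrpc": "2.0", "id": message.get("id")}
    if handler is None:
        # -32601 is the standard JSON-RPC "Method not found" code.
        reply["error"] = {"code": -32601, "message": "Method not found"}
    else:
        reply["result"] = handler(message.get("params", {}))
    return json.dumps(reply).encode()


example = handle_mcp_post(json.dumps({
    "jsonrpc": "2.0", "id": 7,
    "method": "tools/call", "params": {"name": "ping"},
}).encode())
```

Because everything arrives at one route, gateway policies such as authentication, rate limiting, and tracing can be attached once instead of per endpoint.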

Authentication Is No Longer Per Request

One subtle but important shift is how authentication is handled.

Instead of validating each request independently, MCP systems often establish trust at the connection or session level, with tokens validated before interaction begins.

That changes how you think about:

Access control

Role mapping

Scope enforcement

It also pushes developers to consider:

Which tools should be publicly accessible

Which require elevated permissions

How to isolate sensitive operations

This is closer to service-level authorization than traditional request-level checks.
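One way to picture this is a session that carries scopes, with each tool declaring the scope it needs. The sketch below is an assumption-heavy illustration: the token table stands in for real token validation, and the scope names, tool names, and helper functions are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List

# Stand-in for real token validation (for example, verifying a signed token).
KNOWN_TOKENS: Dict[str, FrozenSet[str]] = {
    "token-reader": frozenset({"orders:read"}),
    "token-admin": frozenset({"orders:read", "orders:refund"}),
}

# Each tool declares the scope it requires.
TOOL_SCOPES: Dict[str, str] = {
    "get_order_status": "orders:read",
    "refund_order": "orders:refund",
}


@dataclass(frozen=True)
class Session:
    """Trust established once, then reused for every call in the session."""
    scopes: FrozenSet[str]


def open_session(token: str) -> Session:
    """Validate the token before any interaction begins."""
    if token not in KNOWN_TOKENS:
        raise PermissionError("unknown token")
    return Session(scopes=KNOWN_TOKENS[token])


def visible_tools(session: Session) -> List[str]:
    """Only advertise tools the session is allowed to use."""
    return sorted(name for name, scope in TOOL_SCOPES.items()
                  if scope in session.scopes)


def authorize_call(session: Session, tool_name: str) -> None:
    """Enforce the scope again at call time, not just at listing time."""
    required = TOOL_SCOPES[tool_name]
    if required not in session.scopes:
        raise PermissionError(f"{tool_name} requires scope {required}")


reader = open_session("token-reader")
admin = open_session("token-admin")
reader_tools = visible_tools(reader)
admin_tools = visible_tools(admin)
```

Filtering the tool list and checking at call time are deliberately separate steps here: hiding a sensitive tool from discovery is not a substitute for refusing to run it.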

From Local Tooling to Production Systems

One of the strengths of the MCP approach is how it spans environments. The same server can:

Run locally for testing

Be inspected through development tools

Be integrated into host environments

Be deployed over HTTP for production use

This continuity reduces the gap between experimentation and deployment.
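That continuity is easier to keep if message handling is separated from transport. The following sketch assumes newline-delimited JSON messages for the local case and a raw request body for the HTTP case. `handle_message`, `serve_lines`, and `serve_http_body` are hypothetical names, and only the `ping` method is implemented.

```python
import io
import json
from typing import Dict, Optional, TextIO


def handle_message(message: Dict) -> Optional[Dict]:
    """Transport-independent core: parsed message in, reply (or None) out."""
    if "id" not in message:
        return None  # Notifications get no reply.
    if message.get("method") == "ping":
        return {"jsonrpc": "2.0", "id": message["id"], "result": {}}
    return {
        "jsonrpc": "2.0",
        "id": message["id"],
        "error": {"code": -32601, "message": "Method not found"},
    }


def serve_lines(reader: TextIO, writer: TextIO) -> None:
    """Local transport: one JSON message per line (e.g. stdin and stdout)."""
    for line in reader:
        if not line.strip():
            continue
        reply = handle_message(json.loads(line))
        if reply is not None:
            writer.write(json.dumps(reply) + "\n")


def serve_http_body(body: bytes) -> bytes:
    """HTTP transport: the same core, called from a request handler."""
    reply = handle_message(json.loads(body))
    return json.dumps(reply).encode() if reply is not None else b""


# Exercise the local path with in-memory streams instead of real stdin/stdout.
incoming = io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n')
outgoing = io.StringIO()
serve_lines(incoming, outgoing)

# Exercise the HTTP path with the same kind of message.
http_reply = serve_http_body(b'{"jsonrpc": "2.0", "id": 2, "method": "ping"}')
```

Both paths return the same reply for the same message, which is what lets a server move from a laptop to a deployment without rewriting its logic.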

For web developers, that means fewer environment-specific rewrites, easier validation workflows, and more predictable rollout paths.

It also makes it easier to introduce AI-driven capabilities incrementally instead of rewriting entire systems.

Where MCP Fits in a Web Developer’s Mental Model

It helps to place MCP alongside existing concepts rather than treating it as something entirely new.

Think of it as:

An extension of API design for AI-native clients

A structured way to expose backend capabilities

A protocol for discovery, not just execution

It does not replace REST or GraphQL. It complements them by sitting one layer above, where interpretation and routing happen.

That positioning makes it easier to adopt without overhauling your architecture.

Key Takeaways

MCP reframes backend design from static endpoints to discoverable capabilities

Tools, resources, and prompts map cleanly to actions, context, and reusable logic

Clear naming and schema design directly influence system behavior

HTTP-based deployment keeps MCP aligned with existing web infrastructure

Authentication shifts toward session-level trust and scoped permissions

The protocol reduces integration friction by standardizing how capabilities are exposed

MCP is still early, but the direction is clear. AI clients are becoming another consumer of backend systems, and they require more than just endpoints.

For web developers, this is less about learning a new tool and more about recognizing a familiar pattern evolving into something more dynamic.

If you want to explore these ideas in more depth, check out Ship an MCP Server in Python FAST by Christoffer Noring.

Building with AI? We want to hear from you 👀

Take our quick survey and help us shape content around real workflow bottlenecks.

This Week in the News

⚛️ TanStack Start adds experimental React Server Components support: TanStack Start now includes experimental support for React Server Components (RSC), bringing server-first patterns into its full-stack React framework. It’s another sign that RSC is slowly moving from theory into real-world tooling.

🤖 Claude introduces “routines” for repeatable workflows: Claude now supports routines, a way to define repeatable workflows for common tasks. Instead of prompting from scratch each time, developers can structure and reuse sequences, making interactions more consistent and efficient.

🎨 Hugo adds new CSS capabilities: Hugo’s latest updates introduce new CSS-related capabilities, expanding how styles can be handled within the static site generator. The changes aim to simplify styling workflows and give developers more flexibility when working with modern CSS setups.

🧩 GitHub introduces stacked PRs in private preview: GitHub has opened a private preview for stacked pull requests, adding native support for workflows where large changes are split into a chain of dependent PRs. This approach can make reviews more manageable and help teams ship complex features incrementally.

Beyond the Headlines

🎨 Why AI still struggles with frontend development: While AI tools are getting better at generating code, frontend development remains a weak spot. This piece breaks down where things fall apart, especially around layout, styling, and the nuanced decisions that go into building good user interfaces. The bigger takeaway is that frontend work isn’t just about producing code. It involves visual judgment, edge cases, and context that are still hard for AI systems to consistently handle.

🏗️ Rethinking architecture with the vertical codebase: This article explores the idea of a “vertical codebase,” where features are organized end-to-end instead of being split across layers like components, services, and utilities. The approach focuses on grouping everything related to a feature in one place, making codebases easier to navigate and reason about. It’s a shift from traditional horizontal layering toward a structure that prioritizes ownership, clarity, and maintainability as applications grow.

🧹 Mastering ESLint rules for better code quality: This guide takes a deeper look at how to effectively use ESLint rules beyond basic setups. It focuses on understanding, customizing, and applying rules in a way that actually improves code quality instead of just adding noise. The key idea is that linting works best when it reflects team conventions and real-world usage, not just default configurations.

📦 Ten years of JavaScript modules on the web: It’s been ten years since the initial push to bring native JavaScript modules to the web platform. This retrospective looks at how far the ecosystem has come, from early proposals to widespread adoption across browsers and tooling. It’s a reminder of how foundational features evolve slowly, but end up reshaping how we structure and ship web applications.

🎤 Inside npmx, a new way to explore the npm ecosystem: This 50-minute conversation with the developers behind npmx dives into the thinking behind a new way to browse and interact with the npm registry. The project is gaining traction as an alternative approach to discovering and working with packages. It offers a closer look at how tooling around npm is evolving beyond installation into better exploration and developer workflows.

Stop Prompting. Start Speccing.

After a hugely successful Cohort 1, we’re back with the Cohort 2 hands-on workshop!

Here you’ll build a real full-stack application using the same spec-first methodology used by MAANG engineering teams.

20 early bird seats at 40% off. Use code NL40

Tool of the Week

🎨 Extract color palettes directly from images

Choosing the right color palette can be tricky, especially when working with images. Color Thief makes it simple by extracting dominant colors and palettes directly from images using a lightweight JavaScript library.

It’s especially useful for building dynamic UIs, theming applications, or generating color schemes based on user content.

That’s all for this week. Have any ideas you want to see in the next article? Hit Reply!

Cheers!

Editor-in-chief,

Kinnari Chohan

👋 Advertise with us

Interested in sponsoring this newsletter and reaching a highly engaged audience of tech professionals? Simply reply to this email, and our team will get in touch with the next steps.